Using .NET, Angular, Kubernetes, Azure DevOps, Terraform, Event Hubs and other Azure resources.

This is one part of a series. If you have not read PART 0: OVERVIEW yet, you can go there first to get an overview of what we will actually be doing here …

Introduction

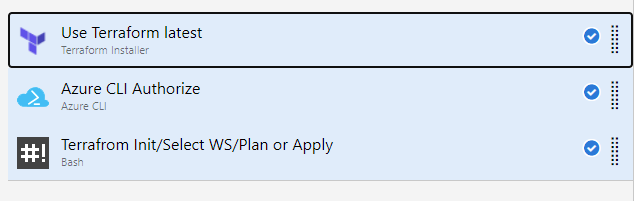

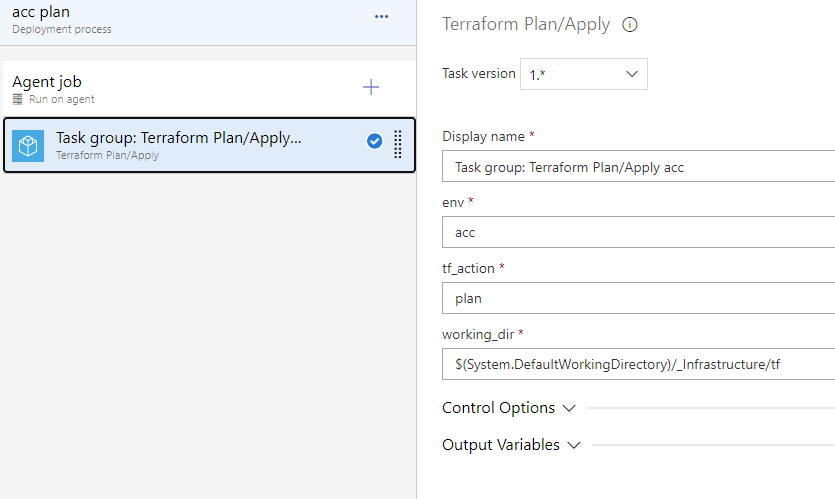

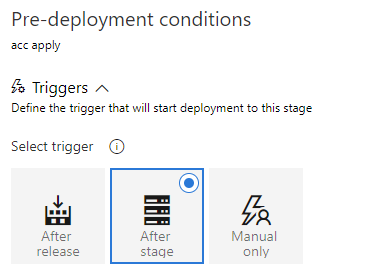

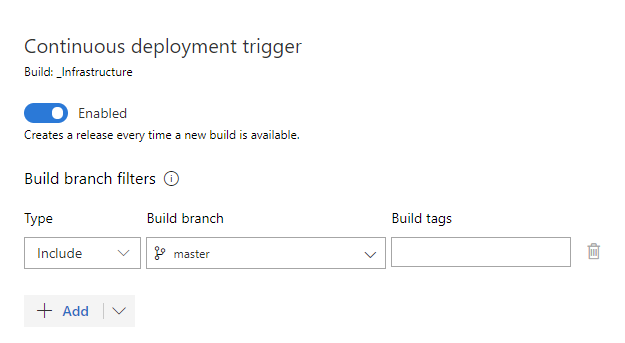

In the last part, PART 1.1: INFRASTRUCTURE PIPELINE, we finalized the infrastructure by building its pipeline.

Now we go further and start the web API for our notification application environment. We will build the web API in .NET Core. It provides two endpoints: one for creating a message and another for getting all messages. This is sufficient for our example. Furthermore, we implement the use of an Azure storage table, Event Hubs, Application Insights and Key Vault.

Yes, I repeat myself, but the code here is not production ready (error handling, retries, tests, etc. are missing). Maybe I will add some more features to all parts of this application (if anyone is interested) once our base version is done. But the base version already has a lot of stuff in it, so let’s start…

Prerequisites

We only need an editor or development environment for .NET and .NET Core 3.1 for creating a .NET Core application (but I think this is obvious!). We also need the “Common lib” for our .NET projects, which is described below.

Common lib

We need the common lib for this part as well as for our workers (next parts!), which will handle the sent notifications. The common lib source can be downloaded/cloned from the feature/part2 branch; put it next to the infrastructure folder. I will not list all the code here, but I will give a short overview of the content and describe the folders below.

Data

This folder contains the notification entity, which we save in an Azure storage table. There is also a repository, which does the job of communicating with the storage table. (Please read the code for more information on how this works in detail, and/or visit https://docs.microsoft.com/en-us/azure/cosmos-db/tutorial-develop-table-dotnet for the background. Do not be confused that we are using the Cosmos DB API; this API also works with Azure storage tables.)

Extensions

One extension registers Application Insights and the other one sets up Key Vault, where we will later get our secrets from. The Key Vault credentials will be passed in via environment variables (but this is part of the next part 🙂).

Protobuf/Messages

The protobuf folder contains the notification message which we will send to and receive from our event hub. I chose to send the message in this binary format. The messages folder contains the C# version of the message (which we will use in our code). If you take a look at the “Notifier.Common.csproj” file, you will find an item group which does this generation job (the gRPC tool is used for this).

Settings

All settings are defined here as objects, which we will use in our .NET projects.

WebApi

Again, we should first create a repository for the web API, where we can push our code for creating the pipelines etc. in the next part. So let’s create an Azure DevOps repository named “WebApi” in our “Infrastructure” project and clone it next to the “Infrastructure” and “Common” folders. The complete source code can also be downloaded/cloned here.

Base Setup

Now create a .NET Core console project and name it (incl. the solution) “Notifier.WebApi”. We start by editing the “csproj” file. Replace/edit its contents with the following.

One interesting thing in the first property group is the “UserSecretsId”. Yes, for local development we use the user secrets feature, so no secrets have to be in the repository. (The user secrets id is normally created when you right-click the project for the first time and select “Manage User Secrets”. Here we set it directly in the project file, and it is a good idea to add the application name to the id. Otherwise it is very hard to find the secrets on your computer.)

The next item group is straightforward: it includes the “appsettings.json”, which we will create very soon!

Then we have some NuGet packages we will need for our web API and, last but not least, the common lib included as a project reference (make sure the path is right in your environment). A better approach would be to add the common lib as a git submodule or NuGet package, but for now this is ok.
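As a rough orientation, a project file with these groups could look like the sketch below. The web SDK, the copy setting for “appsettings.json” and the relative path to the common lib are assumptions; the actual package list is left as a comment, because it depends on the code in the repository.

```xml
<Project Sdk="Microsoft.NET.Sdk.Web">

  <PropertyGroup>
    <TargetFramework>netcoreapp3.1</TargetFramework>
    <!-- Prefixing the id with the application name makes the secrets folder easy to find. -->
    <UserSecretsId>notifier-webapi-6fd34aeb-1b78-4492-86dd-a5aa00ce38cd</UserSecretsId>
  </PropertyGroup>

  <ItemGroup>
    <!-- Assumption: copy appsettings.json next to the built binaries. -->
    <Content Update="appsettings.json">
      <CopyToOutputDirectory>PreserveNewest</CopyToOutputDirectory>
    </Content>
  </ItemGroup>

  <ItemGroup>
    <!-- PackageReference entries for the NuGet packages go here. -->
  </ItemGroup>

  <ItemGroup>
    <!-- Assumed relative path to the common lib; adjust it to your folder layout. -->
    <ProjectReference Include="..\..\Common\Notifier.Common\Notifier.Common.csproj" />
  </ItemGroup>

</Project>
```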

It is time to create the “appsettings.json” file in the project root. Please put the content below into that file.

First we configure the log level for our logging. Then we see the settings for our Azure resources. These are secrets, so we will define them in our user secrets file later. And last come the CORS settings, which allow our later Angular frontend to access the web API without CORS issues.

Let’s go to our user secrets file. Right-click the project and select “Manage User Secrets”. If the file does not open (I sometimes had this issue with older .NET Core versions), you can use the .NET Core CLI or simply open the file located in “C:\Users\{YOUR_USER}\AppData\Roaming\Microsoft\UserSecrets\notifier-webapi-6fd34aeb-1b78-4492-86dd-a5aa00ce38cd”. Then put the following in there and look up your secrets in the Azure portal.

We use the secrets from our acceptance environment here. This is where you can find them…
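To make the three blocks more tangible, here is a sketch of such a settings file. All section and key names (“Storage”, “EventHub”, “Cors”, …) are assumptions for illustration, and the origin is simply the default address of the Angular dev server. The secret values stay empty on purpose.

```json
{
  "Logging": {
    "LogLevel": {
      "Default": "Information",
      "Microsoft": "Warning"
    }
  },
  "ApplicationInsights": {
    "InstrumentationKey": ""
  },
  "Storage": {
    "ConnectionString": ""
  },
  "EventHub": {
    "ConnectionString": ""
  },
  "Cors": {
    "Origins": [ "http://localhost:4200" ]
  }
}
```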

- Application Insights – Instrumentation Key: select notifier-application-insights-acc resource -> Overview and find the key on the top right.

- Storage Table – Connection String: select notifierstoreacc resource -> Access keys and then copy the primary connection string.

- Event Hubs – Connection String: select eventhubs-acc -> Event Hubs -> notifications -> Shared access policies -> send and copy the primary connection string.
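A user secrets file for these three values could look like the sketch below. The key names are hypothetical; they only have to match the sections your “appsettings.json” uses, written in the flattened “Section:Key” form. The placeholders in angle brackets are where the values from the portal go.

```json
{
  "ApplicationInsights:InstrumentationKey": "<instrumentation key>",
  "Storage:ConnectionString": "<storage table connection string>",
  "EventHub:ConnectionString": "<event hub connection string>"
}
```

If you prefer the CLI, `dotnet user-secrets set "<key>" "<value>"`, run in the project folder, writes the same entries.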

Implementation

We start by directly implementing the functionality of the API. Later we wire everything together when we set up the “Program.cs” and “Startup.cs”. We do it in this order because otherwise we would have to jump between files and/or deal with errors, because the things we want to wire do not exist yet…

So let’s start by creating the model for the notification response. Please create a folder “Models” in the project root directory, create a class file named “NotificationModel.cs” in it and put the following into it.

The model only contains a message and a timestamp. So let’s go on with the services. For this, create a folder named “Services” and create the following files in there:

The service interface (“INotificationService”) has two simple methods. And now the implementation (“NotificationService”):

The constructor takes the logger, the event hub settings (the object comes from the common lib) and the repository, which is also located in the common lib. With this we have everything we need. “CreateAndSendAsync” does exactly what its name says: first it creates the entity and saves it into the table, and second it sends it to the event hub. The functionality is split into two private methods, which makes it cleaner and easier to read. Please check the private methods and the common lib for further information on how the event hubs and the storage table are used here. (This is a very simple implementation, without retries etc.)
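A minimal sketch of such a model, assuming the property names “Message” and “Timestamp” and the namespace shown here (the real file may name them differently):

```csharp
using System;

namespace Notifier.WebApi.Models
{
    // Response model returned by the API: the text and the time it was created.
    public class NotificationModel
    {
        public string Message { get; set; }

        public DateTime Timestamp { get; set; }
    }
}
```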
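The “save first, then send” flow can be sketched roughly as follows. To keep the sketch compilable on its own, the model, the repository and the sender are hypothetical stand-ins (in the project these roles are played by the model class, the common lib repository and the Event Hubs client), and the logger and settings arguments are left out. Only the name “CreateAndSendAsync” comes from the text; every other name and signature is an assumption.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;

// Hypothetical stand-ins, not the types from the common lib.
public class NotificationModel
{
    public string Message { get; set; }
    public DateTime Timestamp { get; set; }
}

public interface INotificationRepository
{
    Task AddAsync(NotificationModel notification);
    Task<IReadOnlyList<NotificationModel>> GetAllAsync();
}

public interface INotificationSender
{
    Task SendAsync(NotificationModel notification);
}

public interface INotificationService
{
    Task<NotificationModel> CreateAndSendAsync(string message);
    Task<IReadOnlyList<NotificationModel>> GetAllAsync();
}

public class NotificationService : INotificationService
{
    private readonly INotificationRepository _repository;
    private readonly INotificationSender _sender;

    public NotificationService(INotificationRepository repository, INotificationSender sender)
    {
        _repository = repository ?? throw new ArgumentNullException(nameof(repository));
        _sender = sender ?? throw new ArgumentNullException(nameof(sender));
    }

    public async Task<NotificationModel> CreateAndSendAsync(string message)
    {
        var notification = new NotificationModel { Message = message, Timestamp = DateTime.UtcNow };

        await SaveAsync(notification); // 1. persist to the table
        await SendAsync(notification); // 2. publish to the event hub

        return notification;
    }

    public Task<IReadOnlyList<NotificationModel>> GetAllAsync() => _repository.GetAllAsync();

    // Kept as separate private steps, as described above; no retries here.
    private Task SaveAsync(NotificationModel notification) => _repository.AddAsync(notification);

    private Task SendAsync(NotificationModel notification) => _sender.SendAsync(notification);
}
```

Note that with this ordering a failed send leaves a saved but unsent notification behind, which is one of the gaps a production version would have to handle.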

Now we are ready to create the controller which defines our endpoints. So we start again by creating a folder “Controllers”, create a class file named “NotificationController.cs” in it and put the following code into it.

We inject the notification service here and let it do the work. The controller defines the endpoints and formats the responses for our two methods. So we created the following endpoints:

- GET /api/notifications – returns all notifications

- POST /api/notifications – create, save and send the notification
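A controller exposing these two endpoints could be sketched like this. It relies on the “INotificationService” interface from the “Services” folder; the namespaces, the method name “GetAllAsync”, the string parameter of “CreateAndSendAsync” and the request class are assumptions made for this sketch.

```csharp
using System.Threading.Tasks;
using Microsoft.AspNetCore.Mvc;
using Notifier.WebApi.Services; // assumed namespace of INotificationService

namespace Notifier.WebApi.Controllers
{
    // Hypothetical request body for the POST endpoint.
    public class CreateNotificationRequest
    {
        public string Message { get; set; }
    }

    [ApiController]
    [Route("api/notifications")]
    public class NotificationController : ControllerBase
    {
        private readonly INotificationService _notificationService;

        public NotificationController(INotificationService notificationService)
        {
            _notificationService = notificationService;
        }

        // GET /api/notifications
        [HttpGet]
        public async Task<IActionResult> GetAll()
        {
            var notifications = await _notificationService.GetAllAsync();
            return Ok(notifications);
        }

        // POST /api/notifications
        [HttpPost]
        public async Task<IActionResult> Create([FromBody] CreateNotificationRequest request)
        {
            var created = await _notificationService.CreateAndSendAsync(request.Message);
            return Ok(created);
        }
    }
}
```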

This is all we need for the logic! Now we need to wire this together…

Wiring the Parts

We start with the “Program.cs” where the entry point of the application is. Please open that file and replace the content with the following.
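A sketch of such a “Program.cs”, under a few assumptions: the configuration key for the instrumentation key is made up for illustration, the Application Insights logging package is referenced, and the key vault registration from the common lib is only marked by a comment because its API is not shown here.

```csharp
using System.IO;
using Microsoft.AspNetCore.Hosting;
using Microsoft.Extensions.Configuration;
using Microsoft.Extensions.Hosting;
using Microsoft.Extensions.Logging;

namespace Notifier.WebApi
{
    public class Program
    {
        public static void Main(string[] args)
        {
            CreateHostBuilder(args).Build().Run();
        }

        public static IHostBuilder CreateHostBuilder(string[] args) =>
            new HostBuilder()
                .UseContentRoot(Directory.GetCurrentDirectory())
                .ConfigureAppConfiguration((context, config) =>
                {
                    config.AddJsonFile("appsettings.json", optional: false, reloadOnChange: true);
                    config.AddEnvironmentVariables();
                    config.AddCommandLine(args);

                    // Only has an effect where a secrets file exists (local development).
                    config.AddUserSecrets<Program>(optional: true);

                    // The key vault registration from the common lib would follow here.
                })
                .ConfigureLogging((context, logging) =>
                {
                    logging.AddConsole();

                    // Assumed key name; falls back to an empty key if it is not configured.
                    var key = context.Configuration["ApplicationInsights:InstrumentationKey"] ?? string.Empty;
                    logging.AddApplicationInsights(key);
                })
                .ConfigureWebHostDefaults(webBuilder =>
                {
                    webBuilder.UseKestrel();
                    webBuilder.UseStartup<Startup>();
                });
    }
}
```

Sources added later win over earlier ones, which is why the user secrets (and the key vault) come after “appsettings.json”.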

We start creating the host when the main function is called. First we configure the app by calling “ConfigureAppConfiguration”. We add the appsettings.json, environment variables and command line arguments to our configuration. Then we add (or try to add) the user secrets (which is the case in local development). Then we add (or try to add) the key vault (which is the case when we pass its credentials via environment variables, but we will discuss this in the next chapter). All the secret settings will be overridden by the values from the user secrets or the key vault. Next we configure our logging: first we register our console logger, and second the logging to Application Insights (which results in traces there). And in the last step we configure our web server, where we use “Kestrel” and register our web server startup class from “Startup.cs”, which is described next.

Here we do some startup stuff, but the main focus should be on how we configure our services. In the service configuration we start by setting up our app settings, so that we can inject them into our services etc. via the IOptions<T> interface. Then we add the repository and the notification service to our DI container. You will find some other basic configuration here, which I will not describe in detail.
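The service configuration described above could be sketched as follows. The settings class, the section names and the namespace of the service are assumptions for this sketch; the real settings objects and the repository come from the common lib and would be registered in the same way.

```csharp
using Microsoft.AspNetCore.Builder;
using Microsoft.Extensions.Configuration;
using Microsoft.Extensions.DependencyInjection;
using Notifier.WebApi.Services; // assumed namespace of the notification service

namespace Notifier.WebApi
{
    // Hypothetical settings class; the real ones live in the common lib.
    public class EventHubSettings
    {
        public string ConnectionString { get; set; }
    }

    public class Startup
    {
        private readonly IConfiguration _configuration;

        public Startup(IConfiguration configuration) => _configuration = configuration;

        public void ConfigureServices(IServiceCollection services)
        {
            // Bind a configuration section so services can inject IOptions<EventHubSettings>.
            services.Configure<EventHubSettings>(_configuration.GetSection("EventHub"));

            // Register the notification service; the repository is added the same way.
            services.AddScoped<INotificationService, NotificationService>();

            // Allow the configured frontend origins (assumed section name).
            var origins = _configuration.GetSection("Cors:Origins").Get<string[]>() ?? new string[0];
            services.AddCors(options => options.AddDefaultPolicy(policy =>
                policy.WithOrigins(origins).AllowAnyHeader().AllowAnyMethod()));

            services.AddControllers();
        }

        public void Configure(IApplicationBuilder app)
        {
            app.UseRouting();
            app.UseCors(); // must sit between UseRouting and UseEndpoints
            app.UseEndpoints(endpoints => endpoints.MapControllers());
        }
    }
}
```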

Test

If we have done everything correctly, you can run the application locally and test the endpoints. You can use Postman or, like me, the REST Client (a Visual Studio Code plugin), which is quite cool, because I can write my requests as code and version them, etc. Here are the requests:

Once you have created some messages and fetched them, you can check Application Insights in the Azure portal and view, for example, the logs (notifier-application-insights-acc -> Logs -> traces). Or check the application map, which should show the connections between the components. At this point we can see that our web API sends to the event hub and calls the Azure table.
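For the REST Client, a request file for the two endpoints could look like the sketch below. The port (Kestrel's default HTTP port) and the shape of the request body are assumptions; adjust both to your setup.

```http
### Get all notifications
GET http://localhost:5000/api/notifications

### Create, save and send a notification
POST http://localhost:5000/api/notifications
Content-Type: application/json

{
  "message": "Hello from the REST Client"
}
```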

Conclusion

We have created a .NET Core web API which takes care of its secrets and uses various Azure resources like Event Hubs, Application Insights, Key Vault and Azure tables. We can now create, persist and read notifications.

Preview

In the next part, PART 2.1, we will bring the web API to our acceptance stage, running in Docker on the Kubernetes cluster. We also want to integrate and automate this in Azure Pipelines.